Cynefin Framework, Statistics and Decision Analysis Report


- 1 - 30/01/2012

Cynefin, Statistics and Decision Analysis

Simon French

Risk Initiative and Statistical Consultancy Unit

Department of Statistics

University of Warwick

Coventry, CV4 7AL

simon.french@warwick.ac.uk

January 2012

Abstract

David Snowden’s Cynefin framework, introduced to articulate discussions of sense-making,

knowledge management and organisational learning, also has much to offer discussion of

statistical inference and decision analysis. I explore its value, particularly in its ability to help

recognise which analytic and modelling methodologies are most likely to offer appropriate

support in a given context. The framework also offers a further perspective on the relationship

between scenario thinking and decision analysis in supporting decision makers.

Keywords: Cynefin; Bayesian statistics; decision analysis; decision support systems;

knowledge management; scenarios; scientific induction; sense-making;

statistical inference.

1 Introduction

Several years ago I attended a seminar on knowledge management given by David Snowden. At

this he described the Cynefin conceptual framework, which, inter alia, offers a categorisation

of decision contexts (Snowden, 2002). Initially I saw little advantage over other

categorisations of decisions, such as the strategy pyramid: viz. strategic, tactical and

operational (see Figure 2 below). However, Carmen Niculae had more insight and, working

with her and others, I have appreciated Cynefin’s power to articulate discussions of inference

and decision making. Below, I explore Cynefin and its import for thinking about statistics

and decision analysis. There is nothing dramatic in anything I shall say. Many will have

reached similar conclusions. Perhaps also David Snowden will take this as a small apology

for my initial dismissal of his ideas.


I hope it becomes clear that Cynefin offers benefits to several types of user: analysts can use

it to help identify what methodologies might be suitable for the problem faced by their

clients; their clients themselves can use it to gain insight into the qualities of the issues that

they face; and academic researchers may use it in exploring and categorising methodologies

within statistics, decision analysis and operational research. In this paper, my discussion

leans much towards the last of these three, though there are many elements that speak to all

three audiences. Thus this paper adds to many discussions of operational research (OR)

methodology and the OR process that may be found in the literature, recasting parts of them

into the Cynefin framework and drawing, I believe, some new insights, particularly in

relation to the interplay between decision makers’ knowledge of the external world,

themselves and the types of statistical, decision and OR analysis that may be most suited to

their current context (see, e.g., White, 1975; 1985; Mingers and Brocklesby, 1997; Mingers,

2003; Ormerod, 2008; Luoma et al., 2011).

In the next section I describe Cynefin, before turning in Section 3 to some specific

applications that I have found helpful in articulating discussion across a range of statistical and

decision analytic contexts. In Section 4, I explore the relationship between knowledge

management and decision making. Knowledge and the process of inference are intimately

related; in Section 5, I explore some relationships between Cynefin, the Scientific Method

and statistical methodology; and in Section 6 I build on this to discuss decision analysis in the

knowable and complex domains. There I discuss how uncertainties might be addressed,

exploring a relationship between scenario thinking and formal decision analysis. Section 7

offers some brief conclusions.

2 Cynefin

So what is Cynefin? The name comes from the Welsh for ‘habitat’, at least in a narrow

translation. But Snowden (2002) suggests there are also connotations of acquaintance and

familiarity, quoting Kyffin Williams, a Welsh artist:

“(Cynefin) describes that relationship – the place of your birth and of your upbringing, the

environment in which you live and to which you are naturally acclimatised.”

The embodiment of such ideas as familiarity makes Cynefin clearly relevant to knowledge

management. Nonaka’s concept of Ba serves similarly: a place for interactions around

knowledge creation, management and use (Nonaka, 1991; 1999; Nonaka and Toyama, 2003).

Snowden distinguishes Cynefin from Ba through the Welsh word’s association with


community and shared history: for further discussion, see Nordberg (2006). Our concern will be with how Cynefin

- characterises various forms of uncertainty,
- helps structure our thinking about statistical inference and the design of research studies,
- relates to decision making, decision analysis and decision support, and
- relates to our self-knowledge of our values – and values, it should be remembered, should be “the driving force of our decision making” (Keeney, 1992).

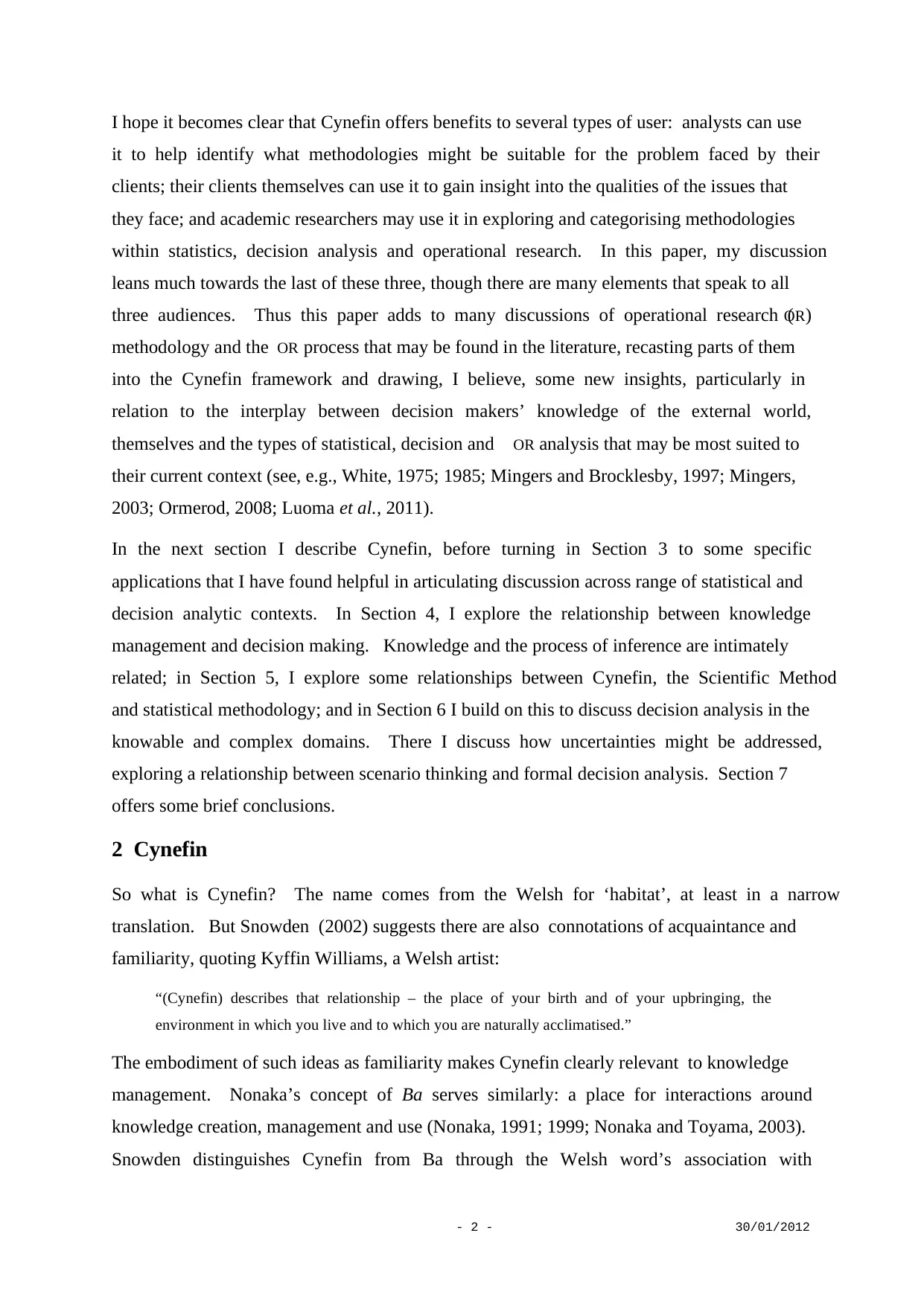

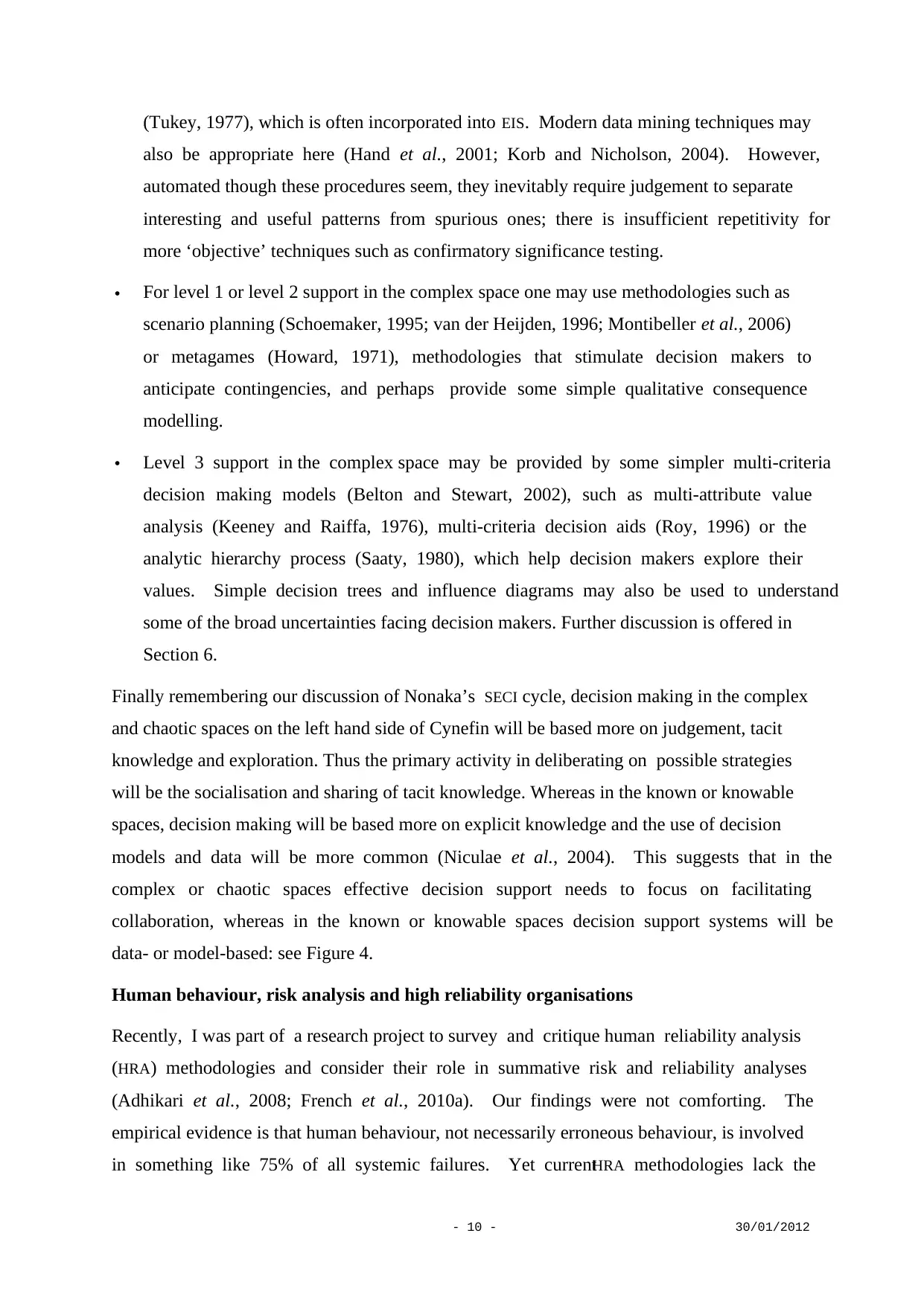

Snowden’s Cynefin model roughly divides decision contexts into four spaces: see Figure 1.

In the known space, also called simple order or the Realm of Scientific Knowledge, the

relationships between cause and effect are well understood. The known space contains those

contexts with which we are most familiar because they occur repeatedly; and because we

have repeated experience of them, we have learnt underlying relationships and behaviours

sufficiently well that all systems can be fully modelled. The consequences of any course of

action can be predicted with near certainty, and decision making tends to take the form of

recognising patterns and responding to them with well-rehearsed actions. Snowden describes

decision making in these cases as SENSE, CATEGORISE AND RESPOND (Kurtz and Snowden,

2003). Klein (1993) terms this recognition-primed decision making; French, Maule and

Papamichail (2009) term such decision making instinctive.

In the knowable space, also called complicated order or the Realm of Scientific Inquiry, cause

and effect relationships are generally understood, but for any specific decision there is a need

to gather and analyse further data to predict

the consequences of a course of action with

any certainty. Snowden characterises

decision making in this space as SENSE,

ANALYSE AND RESPOND. Decision analysis

and support require the fitting and use of

models to forecast the consequences of

actions with appropriate levels of uncertainty.

In this realm standard methods of operational

research and decision analysis apply (see,

e.g., Clemen and Reilly, 2004; Taha, 2006).

Figure 1: Cynefin

[Figure 1 shows four spaces. Known (the Realm of Scientific Knowledge): cause and effect understood and predictable. Knowable (the Realm of Scientific Inquiry): cause and effect can be determined with sufficient data. Complex (the Realm of Social Systems): cause and effect may be determined after the event. Chaotic: cause and effect not discernible.]


In the complex space, also called complex unorder or the Realm of Social Systems, decision

making situations involve many interacting causes and effects. Knowledge in this space is at

best qualitative: there are too many potential interactions to disentangle particular causes and

effects. Every situation has unique elements: some unfamiliarity. There are no precise

quantitative models to predict system behaviours such as those in the known and knowable spaces.

Decision analysis is still possible, but its style will be broader, with less emphasis on details.

Decision support will be more focused on exploring judgement and issues, and on developing

broad strategies that are sufficiently flexible to accommodate evolving situations. Snowden

suggests that in these circumstances decision making will be more of the form: PROBE,

SENSE AND RESPOND. Analysis begins, and perhaps ends, with informal qualitative models,

known as soft modelling, soft OR or problem structuring methods (Rosenhead and Mingers,

2001; Mingers and Rosenhead, 2004; Pidd, 2004; Franco et al., 2006; Shaw et al., 2007). If

quantitative models are used, then they are simple, perhaps linear multi-attribute value

models (Belton and Stewart, 2002). One point of terminology should be noted: namely, this

difficulty of understanding cause and effect can occur in environmental, biological and other

contexts as much as in social systems.
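The simple linear multi-attribute value models mentioned above can be sketched as a weighted sum of scores on each attribute. The following is only an illustration under stated assumptions: the attribute names, weights, scores and the function name `additive_value` are hypothetical and do not come from the paper or from Belton and Stewart (2002).

```python
from typing import Dict


def additive_value(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Linear (additive) multi-attribute value: weighted mean of attribute scores."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same attributes")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Dividing by the total weight means the weights only need to express
    # relative importance; they do not have to sum to one.
    return sum(weights[k] * scores[k] for k in weights) / total_weight


# Hypothetical relative weights and 0-100 scores for two broad strategies.
weights = {"cost": 5, "flexibility": 3, "acceptability": 2}
strategies = {
    "A": {"cost": 80, "flexibility": 40, "acceptability": 60},
    "B": {"cost": 50, "flexibility": 90, "acceptability": 70},
}

# Rank strategies from highest to lowest overall value.
ranked = sorted(
    strategies,
    key=lambda name: additive_value(strategies[name], weights),
    reverse=True,
)
```

In the complex space such a model would serve mainly to explore judgements, for instance by varying the weights and seeing whether the ranking changes, rather than to produce a precise prediction.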

In discussing the complex space, one should be careful to avoid confusion with complexity

science. While some complexity science does relate to Snowden’s complex space, it is more

concerned with computational issues relating to very complicated models. Such models and

computational issues belong more to Snowden’s knowable and known spaces rather than the

complex one. Models, however complicated, seek to encode known understandings of cause

and effect. The difficulty is that, though causes and effects, correlations and non-linearities

are understood, their great number makes it difficult, if not intractable, to compute the

predicted effects of a set of causes.

In the chaotic space, also called chaotic unorder, situations involve events and behaviours

beyond current experience with no obvious candidates for cause and effect. Decision making

cannot be based upon analysis because there are no concepts of how to separate entities and

predict their interactions. Decision makers will need to take probing actions and see what

happens, until they can make some sort of sense of the situation, gradually drawing the

context back into one of the other spaces. Snowden characterises such decision making as

ACT, SENSE AND RESPOND: more prosaically, ‘trial and error’ or even ‘poke it and see what

happens!’
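The four spaces and the decision-making pattern that Snowden associates with each can be summarised in a small lookup table. This is a sketch for reference only: the class and function names are hypothetical, and a hard lookup necessarily simplifies a framework whose boundaries are soft.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CynefinSpace:
    name: str
    realm: Optional[str]  # None where the text gives no "Realm of ..." label
    cause_and_effect: str
    decision_mode: str


# Entries paraphrase the descriptions of the four spaces given in the text.
CYNEFIN = {
    space.name: space
    for space in (
        CynefinSpace("known", "Scientific Knowledge",
                     "understood and predictable",
                     "SENSE, CATEGORISE AND RESPOND"),
        CynefinSpace("knowable", "Scientific Inquiry",
                     "can be determined with sufficient data",
                     "SENSE, ANALYSE AND RESPOND"),
        CynefinSpace("complex", "Social Systems",
                     "may be determined after the event",
                     "PROBE, SENSE AND RESPOND"),
        CynefinSpace("chaotic", None,
                     "not discernible",
                     "ACT, SENSE AND RESPOND"),
    )
}


def decision_mode(space: str) -> str:
    """Return the decision-making pattern associated with a named space."""
    return CYNEFIN[space.strip().lower()].decision_mode
```

For example, `decision_mode("Complex")` returns the probe-first pattern, reflecting that analysis in that space begins with exploration rather than with a fitted model.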

Donald Rumsfeld famously said:


“There are known knowns; there are things we know we know. We also know there are known

unknowns; that is to say we know there are some things we do not know. But there are also

unknown unknowns - the ones we don't know we don't know.”

He missed a category: ‘unknown knowns’. ‘Known knowns’ corresponds to knowledge in

the known space; ‘known unknowns’ to that in the knowable space; and ‘unknown

unknowns’ to that in the chaotic space. ‘Unknown knowns’ would correspond to our

knowledge in the complex space, in which we know candidates for causes and effects, but not

their full relationships.

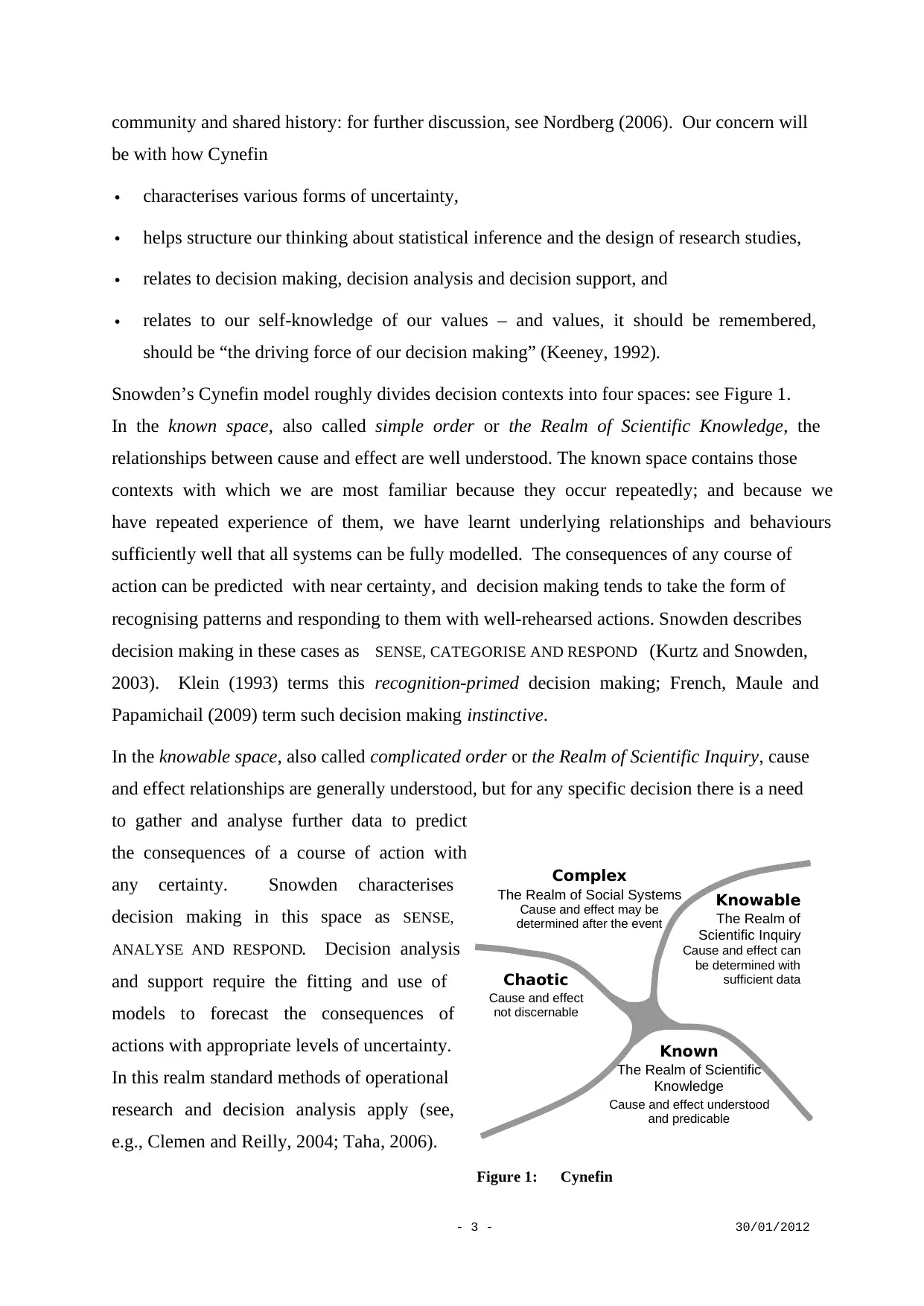

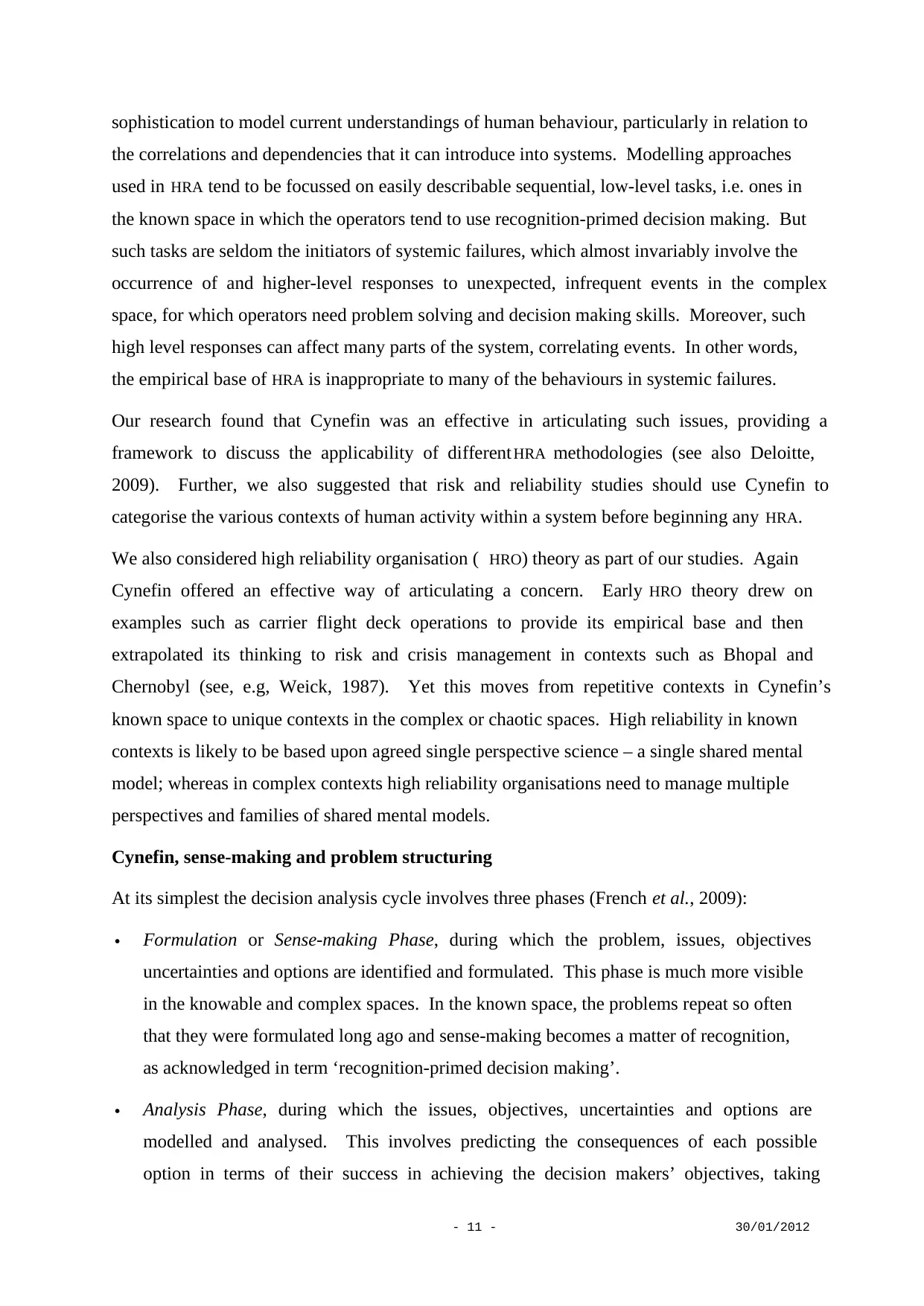

Cynefin has parallels with the strategy pyramid: see Figure 2. There the strategy pyramid has

been extended from its more common trichotomy of operational, tactical and strategic

decisions by including a fourth category of instinctive or recognition-primed decisions

(French et al., 2009). Strategic decisions set a broad direction, a framework in which more

detailed tactical and operational decisions may be taken. In delivering operational decisions,

many much smaller decisions have to be taken. These are the instinctive, recognition-primed

ones. Simon (1960) noted that strategic decisions tend to be associated with unstructured,

unfamiliar problems. Indeed, strategic decisions often have to be taken in the face of such a

myriad of ill-perceived issues, uncertainties and ill-defined objectives that Ackoff (1974)

dubbed such situations messes. There is a clear alignment of the context of strategic decision

making and the complex and even chaotic spaces of Cynefin. Tactical, operational and

instinctive decision contexts have increasing familiarity and structure, and occur with

increasing frequency. Again the alignment with Cynefin is clear. Jacques (1989)

distinguished four domains of activity, and hence decision making, within organisations: the

corporate strategic, general, operational and hands-on work. French et al. (2009) relate

these directly to the strategic, tactical, operational and instinctive categories in the extended

strategy pyramid, and hence they also relate to Cynefin as in the curved arrow in Figure 2. Note, however, that I do not claim precise identification of the chaotic, complex, knowable and known spaces with strategic, tactical, operational and instinctive decision making contexts. While the appropriate domain for instinctive decision making may lie entirely within the known space, operational, tactical and strategic decision making do not align quite so neatly, overlapping adjacent spaces. Indeed, the boundaries between the four spaces in Cynefin should not be taken as hard. The interpretation is much softer with recognition that there are no clear cut boundaries and, say, some contexts in the knowable space may have a minority of characteristics more appropriate to the complex.

Figure 2: Relationship between the perspectives offered by the strategy pyramid and Cynefin

[Figure 2 shows the strategy pyramid (Strategic, Tactical, Operational, Instinctive (recognition primed)) alongside the four Cynefin spaces (Known, Knowable, Complex, Chaotic), with a curved arrow relating the Strategic, Tactical, Operational and Instinctive categories to the spaces.]

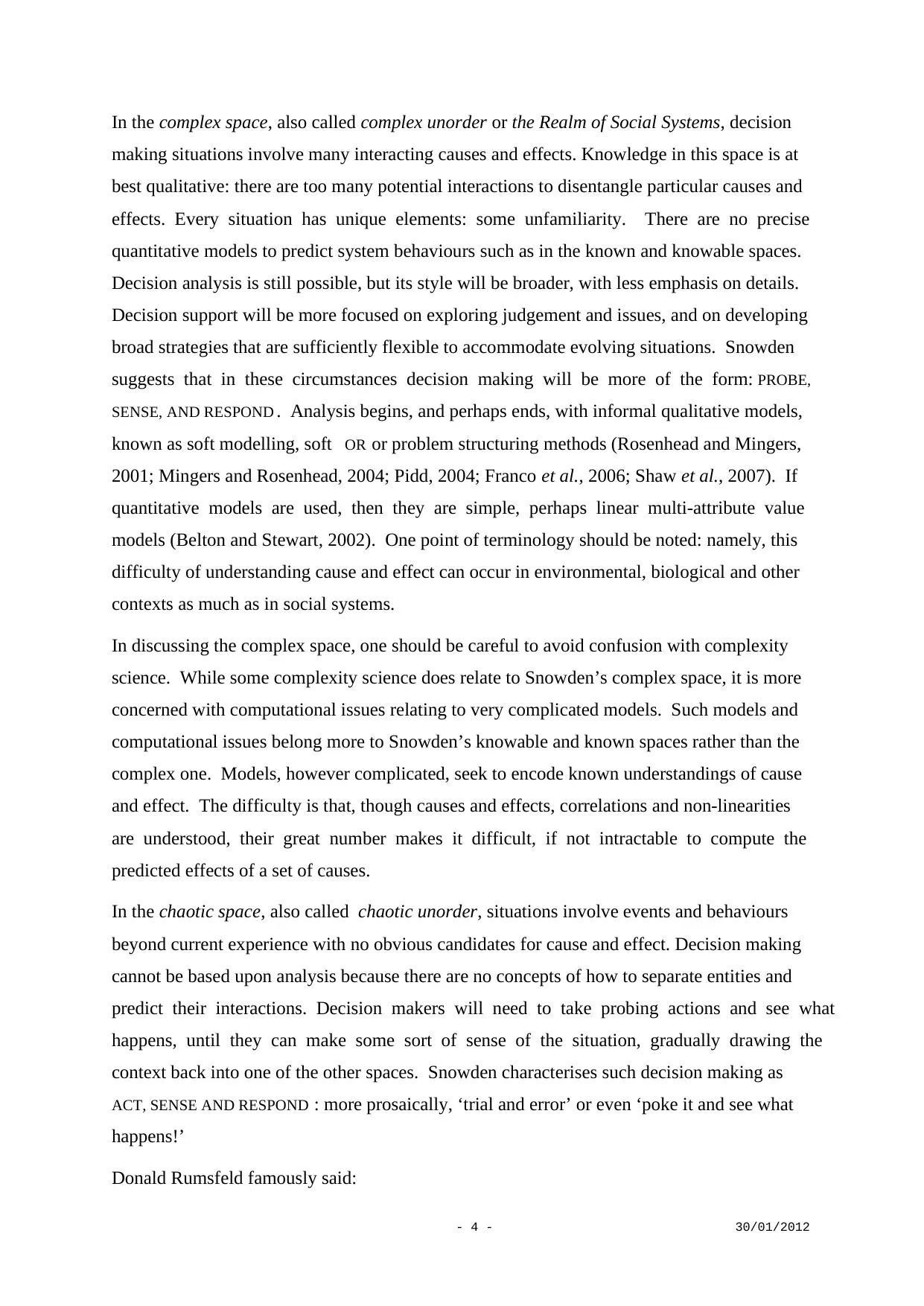

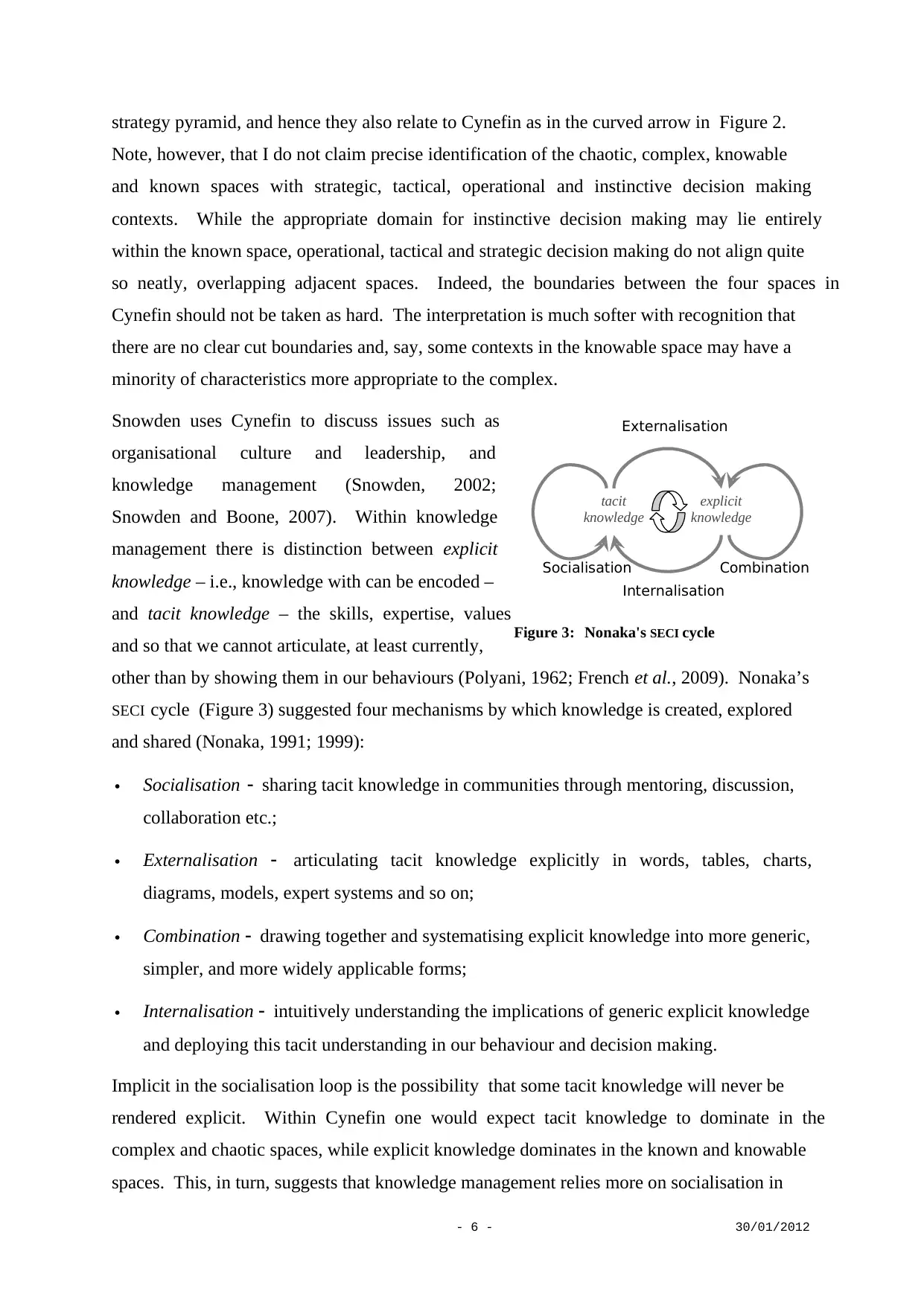

Snowden uses Cynefin to discuss issues such as organisational culture and leadership, and knowledge management (Snowden, 2002; Snowden and Boone, 2007). Within knowledge management there is a distinction between explicit knowledge – i.e., knowledge which can be encoded – and tacit knowledge – the skills, expertise, values and so on that we cannot articulate, at least currently, other than by showing them in our behaviours (Polanyi, 1962; French et al., 2009). Nonaka’s SECI cycle (Figure 3) suggested four mechanisms by which knowledge is created, explored and shared (Nonaka, 1991; 1999):

- Socialisation: sharing tacit knowledge in communities through mentoring, discussion, collaboration etc.;
- Externalisation: articulating tacit knowledge explicitly in words, tables, charts, diagrams, models, expert systems and so on;
- Combination: drawing together and systematising explicit knowledge into more generic, simpler, and more widely applicable forms;
- Internalisation: intuitively understanding the implications of generic explicit knowledge and deploying this tacit understanding in our behaviour and decision making.

Implicit in the socialisation loop is the possibility that some tacit knowledge will never be

rendered explicit. Within Cynefin one would expect tacit knowledge to dominate in the

complex and chaotic spaces, while explicit knowledge dominates in the known and knowable

spaces. This, in turn, suggests that knowledge management relies more on socialisation in

the complex and chaotic spaces and on combination in the known and knowable spaces. Indeed, behaviour in the known and knowable space builds on scientific knowledge, the archetypal example of combined explicit knowledge, i.e. scientific models and theories.

Figure 3: Nonaka's SECI cycle

[Figure 3 shows a cycle between tacit knowledge and explicit knowledge through Socialisation, Externalisation, Combination and Internalisation.]

What does Cynefin bring to discussions of decision making? I do not claim that any of the

following could not be – indeed, has not been – discussed without the structure of Cynefin:

e.g., see Brundtland (1987). However, Cynefin does seem to facilitate such discussions well,

perhaps because it simultaneously addresses knowledge and decision making. In the next

section I illustrate this point with a number of applications.

3 Illustrations of how Cynefin can articulate issues and concerns in a variety of applications

[For many further examples and discussions of applications of Cynefin, see

http://www.cognitive-edge.com/.]

An interpretation of some of the issues in emergency management

Emergency management provided the first example to convince me of the power of Cynefin

to articulate and communicate issues. Looking at many past instances of the handling of

large scale emergencies, it was apparent to Carmen Niculae and me that the authorities,

despite addressing the physical aspects of the emergency well, often lost the confidence of

the public. We found that we could articulate the dynamics of an emergency intuitively using

Cynefin. Essentially, the authorities think that they are handling an event in the known or

knowable spaces, whereas associated socio-political-economic issues may pull the emergency

into the complex space. There is a dislocation between the authorities’ perception of the

situation and reality (French and Niculae, 2005). In the heat of a crisis the imperative is to do

all one physically can to save and protect life and to remove the source of the danger. But

many are affected in different, non-physical ways. Justifiable concerns and stresses build:

individuals fear for or mourn loved ones, as do communities; ways of life are changed

temporarily, perhaps permanently; economic effects occur and can quickly impact some

groups disproportionately; etc. Stresses and concerns grow rapidly (Barnett and Breakwell,

2003; Kasperson et al., 2003), outstripping the resources devoted to community care.

In the early phase of the Chernobyl Accident, the decision context could be placed in the

knowable space: causes and effects were understood, although there were gross uncertainties

about the source term and the distribution of the contamination. Successive post-accident

strategies, which continued to be based upon assumptions belonging to the known and

knowable spaces, focused on technical issues of radiation protection and neglected the

enormous social and cultural harm that the accident was causing (International Atomic

Energy Agency, 1991; Karaoglou et al., 1996; French et al., 2009). Thus, the context passed

into the complex space, but for a period was managed as if it were in the known or knowable

domains. This dislocation led to affected communities questioning and essentially rejecting

all the authorities’ protective and recovery measures. Eventually socio-economic issues were

addressed. For instance, the ETHOS project applied an approach which explored social and

cultural understandings along with more technical perspectives through multi-disciplinary

teams and strong involvement of the local population to rebuild a good overall quality of life

(Heriard Dubreuil et al., 1999).

The same issues can be discerned in the handling of many crises: e.g. Three Mile Island,

Mad-Cow Disease, and Hurricane Katrina (Niculae, 2005). Indeed, as I write this, BP is

being pilloried for its mismanagement of the Gulf Oil Spill and, admittedly before all the

evidence is published, I cannot help reflecting that they may have myopically concentrated on

the technical issues of sealing the well-head, issues largely in the known and knowable

spaces, and missed the socio-economic and cultural impact, both actual and feared, that the

spill was creating, issues that clearly lie in the complex space. Such issues have led many to

argue for a more coherent socio-technical approach to emergencies in which the authorities

embrace and address all the public’s concerns throughout the response and not just the recovery

phase (Fischhoff, 1995; Mumford, 2003; French et al., 2005; French and Niculae, 2005).

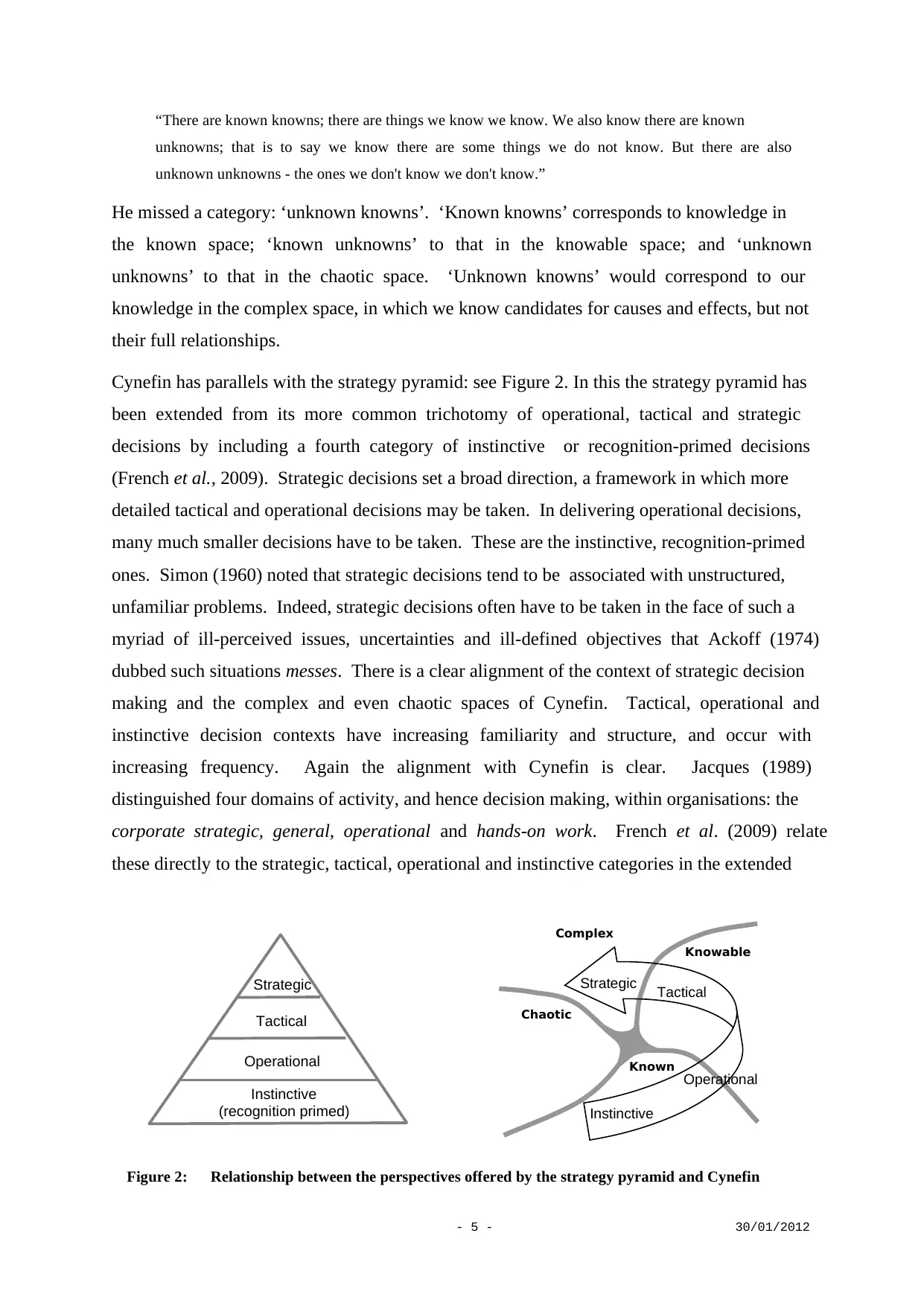

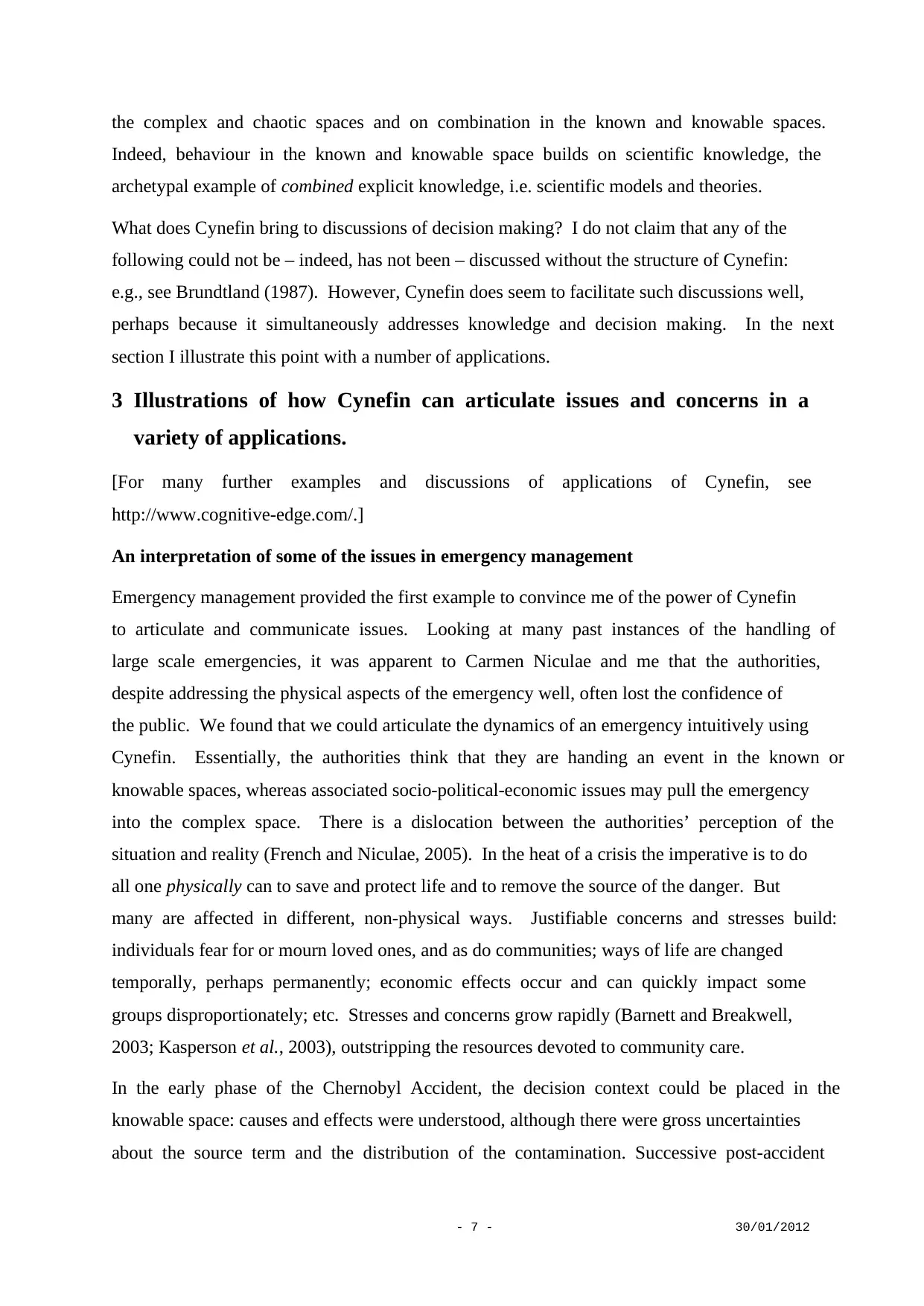

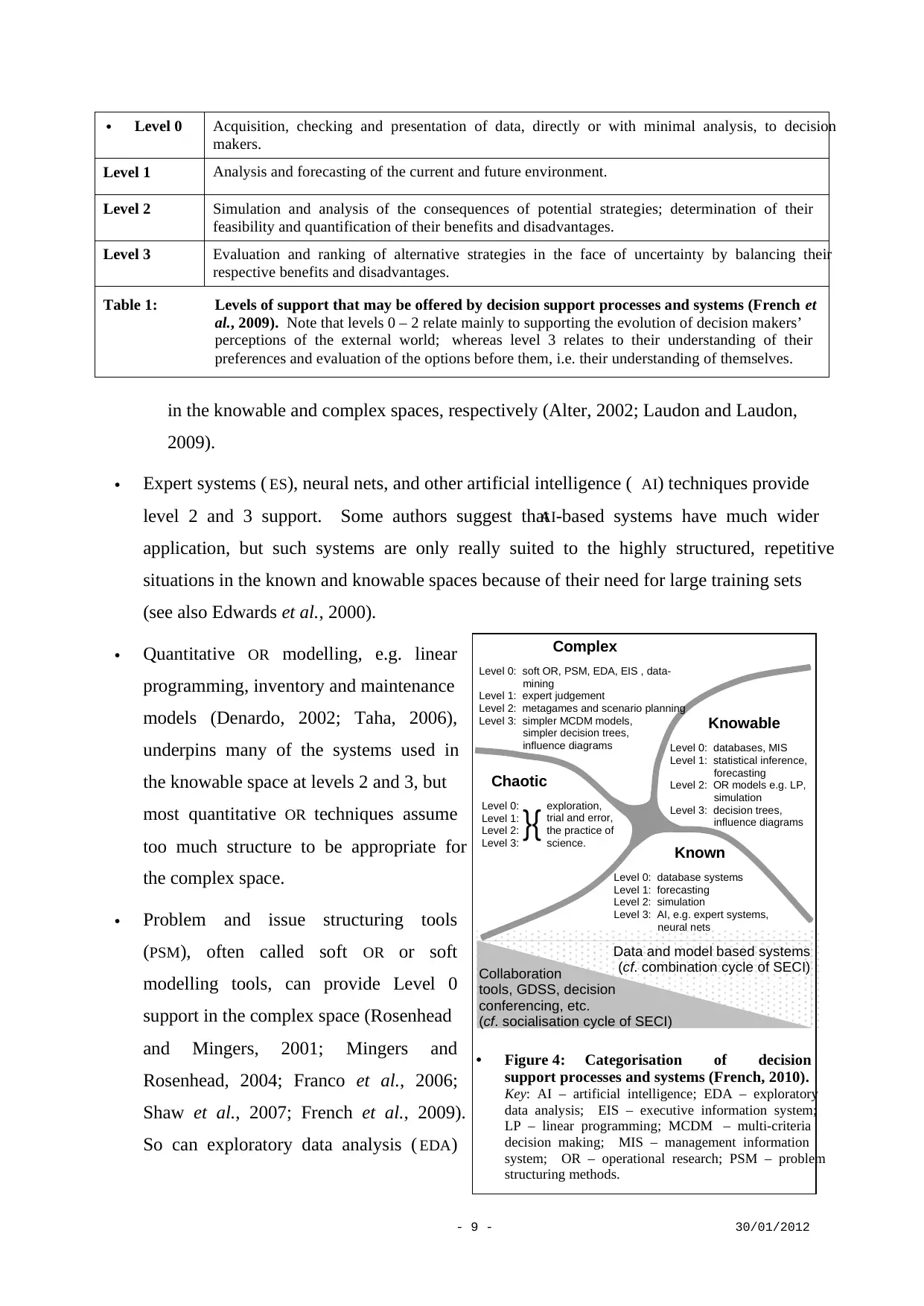

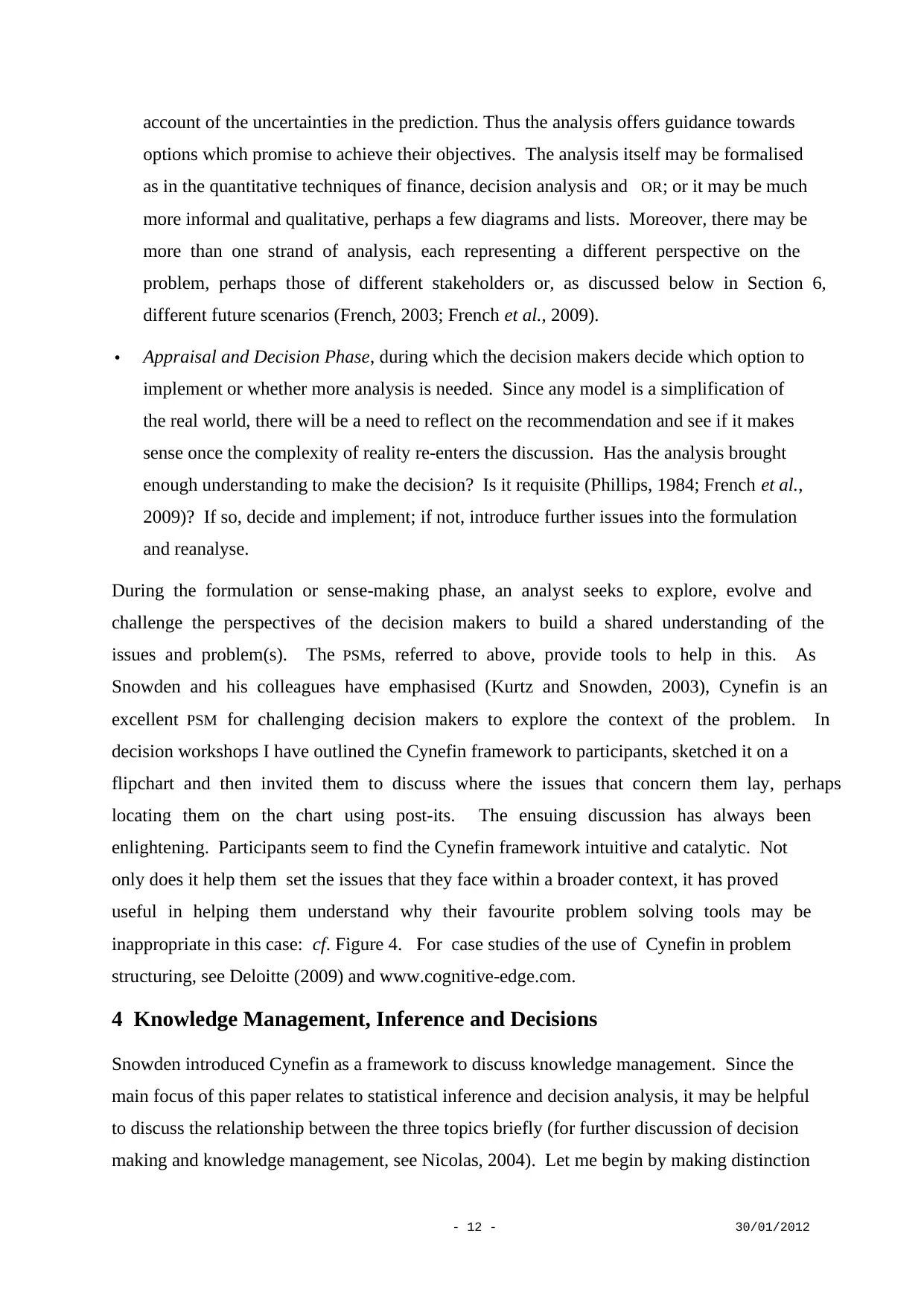

Categorisation of decision support processes and systems

To understand the appropriate use of decision analysis and support, one needs to categorise

decision support processes and systems according to the level of support provided and the

decision context (2009). French (2010) categorises the level of support as in Table 1 and

uses Cynefin for decision contexts: see Figure 4. This suggests, for instance, that simulation

methods have a role to play in offering Level 2 support in the known and knowable spaces, but

are not relevant to the complex or chaotic spaces because in those cause and effect are not

understood sufficiently for simulation. Many similar points become apparent on mapping

other decision support processes and systems into this categorisation: e.g.

Databases and data mining provide Level 0 support over all the spaces, but are often

called management information systems (MIS) or executive information systems (EIS)

in the knowable and complex spaces, respectively (Alter, 2002; Laudon and Laudon,

2009).

Expert systems (ES), neural nets, and other artificial intelligence (AI) techniques provide

level 2 and 3 support. Some authors suggest that AI-based systems have much wider

application, but such systems are only really suited to the highly structured, repetitive

situations in the known and knowable spaces because of their need for large training sets

(see also Edwards et al., 2000).

Quantitative OR modelling, e.g. linear programming, inventory and maintenance models

(Denardo, 2002; Taha, 2006), underpins many of the systems used in the knowable space at

levels 2 and 3, but most quantitative OR techniques assume too much structure to be

appropriate for the complex space.

Problem and issue structuring tools (PSM), often called soft OR or soft modelling tools,

can provide Level 0 support in the complex space (Rosenhead and Mingers, 2001; Mingers and

Rosenhead, 2004; Franco et al., 2006; Shaw et al., 2007; French et al., 2009). So can

exploratory data analysis (EDA)

Chaotic

Levels 0 to 3: exploration, trial and error, the practice of science.

Known

Level 0: database systems

Level 1: forecasting

Level 2: simulation

Level 3: AI, e.g. expert systems, neural nets

Knowable

Level 0: databases, MIS

Level 1: statistical inference, forecasting

Level 2: OR models e.g. LP, simulation

Level 3: decision trees, influence diagrams

Complex

Level 0: soft OR, PSM, EDA, EIS, data-mining

Level 1: expert judgement

Level 2: metagames and scenario planning

Level 3: simpler MCDM models, simpler decision trees, influence diagrams

Known and knowable spaces: data and model based systems (cf. combination cycle of SECI).

Complex and chaotic spaces: collaboration tools, GDSS, decision conferencing, etc. (cf. socialisation cycle of SECI).

Figure 4: Categorisation of decision support processes and systems (French, 2010).

Key: AI – artificial intelligence; EDA – exploratory data analysis; EIS – executive information system; LP – linear programming; MCDM – multi-criteria decision making; MIS – management information system; OR – operational research; PSM – problem structuring methods.

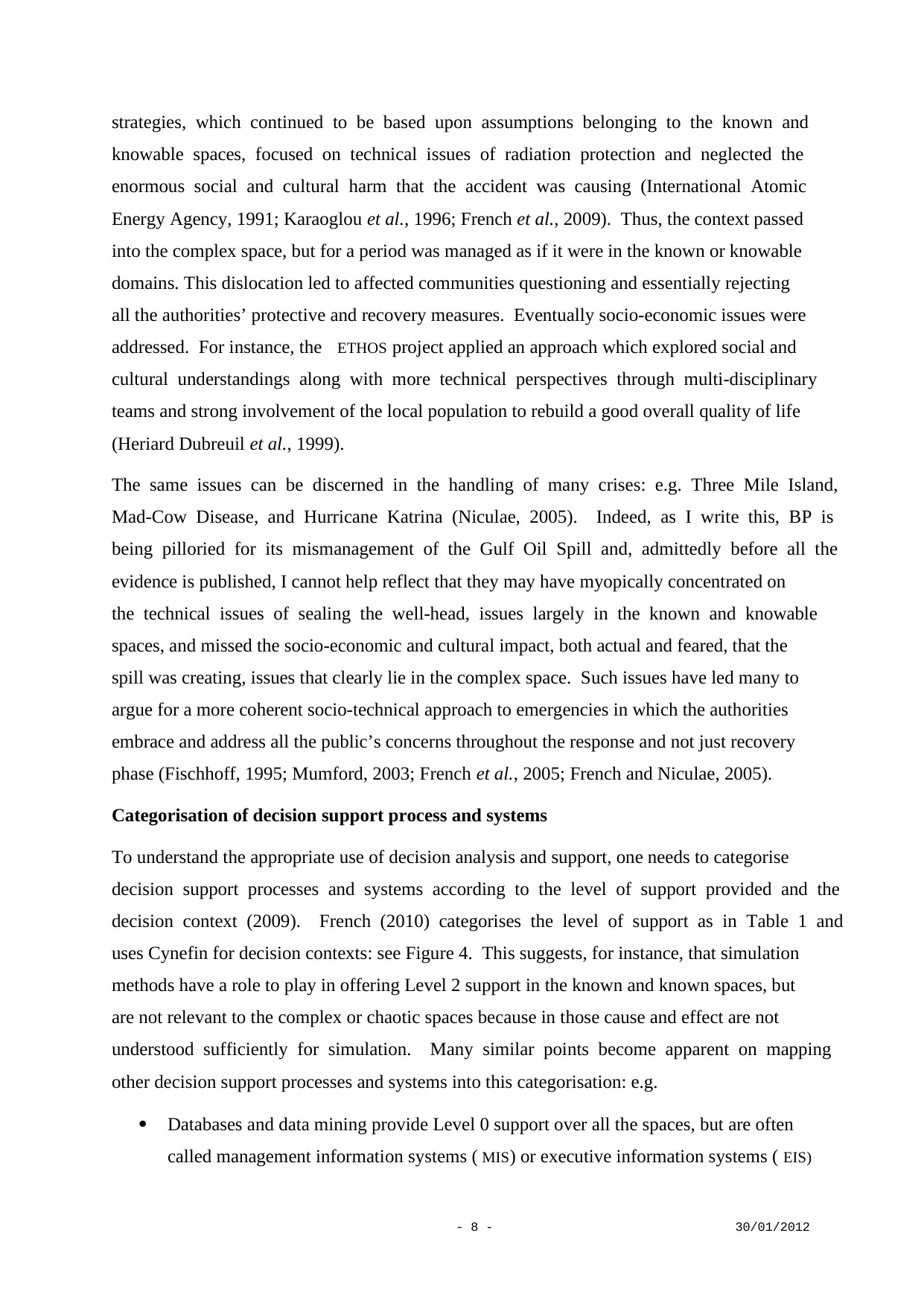

Level 0: Acquisition, checking and presentation of data, directly or with minimal analysis, to decision makers.

Level 1: Analysis and forecasting of the current and future environment.

Level 2: Simulation and analysis of the consequences of potential strategies; determination of their feasibility and quantification of their benefits and disadvantages.

Level 3: Evaluation and ranking of alternative strategies in the face of uncertainty by balancing their respective benefits and disadvantages.

Table 1: Levels of support that may be offered by decision support processes and systems (French et al., 2009). Note that levels 0–2 relate mainly to supporting the evolution of decision makers’ perceptions of the external world, whereas level 3 relates to their understanding of their preferences and evaluation of the options before them, i.e. their understanding of themselves.

(Tukey, 1977), which is often incorporated into EIS. Modern data mining techniques may

also be appropriate here (Hand et al., 2001; Korb and Nicholson, 2004). However,

automated though these procedures seem, they inevitably require judgement to separate

interesting and useful patterns from spurious ones; there is insufficient repetitivity for

more ‘objective’ techniques such as confirmatory significance testing.

For level 1 or level 2 support in the complex space one may use methodologies such as

scenario planning (Schoemaker, 1995; van der Heijden, 1996; Montibeller et al., 2006)

or metagames (Howard, 1971), methodologies that stimulate decision makers to

anticipate contingencies, and perhaps provide some simple qualitative consequence

modelling.

Level 3 support in the complex space may be provided by some simpler multi-criteria

decision making models (Belton and Stewart, 2002), such as multi-attribute value

analysis (Keeney and Raiffa, 1976), multi-criteria decision aids (Roy, 1996) or the

analytic hierarchy process (Saaty, 1980), which help decision makers explore their

values. Simple decision trees and influence diagrams may also be used to understand

some of the broad uncertainties facing decision makers. Further discussion is offered in

Section 6.
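
As a minimal sketch of the kind of simple multi-attribute value model mentioned above, the following Python fragment ranks options with an additive value function. The option names, criteria, weights and scores are all hypothetical and chosen only for illustration; in practice they would be elicited from the decision makers, and the additive form is itself an assumption that needs the criteria to be preferentially independent.

```python
# Illustrative additive multi-attribute value model.
# All names and numbers below are hypothetical, not taken from the paper.

def additive_value(scores, weights):
    """Return the weighted average of criterion scores (scores on a 0-100 scale)."""
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_options(options, weights):
    """Return option names sorted from highest to lowest overall value."""
    return sorted(
        options,
        key=lambda name: additive_value(options[name], weights),
        reverse=True,
    )


# Hypothetical relative weights on three criteria.
weights = {"health": 5, "cost": 2, "public_confidence": 3}

# Hypothetical scores of three strategies against each criterion.
options = {
    "strategy_a": {"health": 90, "cost": 20, "public_confidence": 40},
    "strategy_b": {"health": 70, "cost": 50, "public_confidence": 60},
    "strategy_c": {"health": 40, "cost": 90, "public_confidence": 30},
}

ranking = rank_options(options, weights)
for name in ranking:
    print(name, additive_value(options[name], weights))
```

The value of such a model in the complex space lies less in the ranking itself than in the discussion it prompts: changing the weights and watching the ranking move is one way for decision makers to explore their values.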

Finally, remembering our discussion of Nonaka’s SECI cycle, decision making in the complex

and chaotic spaces on the left-hand side of Cynefin will be based more on judgement, tacit

knowledge and exploration. Thus the primary activity in deliberating on possible strategies

will be the socialisation and sharing of tacit knowledge. In the known or knowable spaces, by

contrast, decision making will be based more on explicit knowledge, and the use of decision

models and data will be more common (Niculae et al., 2004). This suggests that in the

complex or chaotic spaces effective decision support needs to focus on facilitating

collaboration, whereas in the known or knowable spaces decision support systems will be

data- or model-based: see Figure 4.
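
The categorisation in Figure 4 can be read as a simple lookup from decision context and level of support to candidate tools. The sketch below encodes the entries of Figure 4 in Python; the function names `suggest_tools` and `support_emphasis` are hypothetical, and the table is only as reliable as the figure it transcribes. It is an aide-memoire, not a substitute for judging which space a problem actually occupies.

```python
# Figure 4 encoded as a nested lookup: Cynefin space -> support level -> tools.
FIGURE_4 = {
    "known": {
        0: ["database systems"],
        1: ["forecasting"],
        2: ["simulation"],
        3: ["AI, e.g. expert systems, neural nets"],
    },
    "knowable": {
        0: ["databases", "MIS"],
        1: ["statistical inference", "forecasting"],
        2: ["OR models, e.g. LP", "simulation"],
        3: ["decision trees", "influence diagrams"],
    },
    "complex": {
        0: ["soft OR", "PSM", "EDA", "EIS", "data mining"],
        1: ["expert judgement"],
        2: ["metagames", "scenario planning"],
        3: ["simpler MCDM models", "simpler decision trees", "influence diagrams"],
    },
}

# In the chaotic space Figure 4 lists the same activities against every level.
CHAOTIC_ACTIVITIES = ["exploration", "trial and error", "the practice of science"]


def suggest_tools(space, level):
    """Return the tools Figure 4 lists for a Cynefin space and support level (0-3)."""
    if level not in (0, 1, 2, 3):
        raise ValueError("level must be 0, 1, 2 or 3")
    if space == "chaotic":
        return list(CHAOTIC_ACTIVITIES)
    return list(FIGURE_4[space][level])


def support_emphasis(space):
    """Return the broad style of support Figure 4 associates with a space."""
    if space in ("known", "knowable"):
        return "data- or model-based systems"
    if space in ("complex", "chaotic"):
        return "collaboration tools"
    raise ValueError(f"unknown Cynefin space: {space!r}")


print(suggest_tools("knowable", 2))
print(support_emphasis("complex"))
```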

Human behaviour, risk analysis and high reliability organisations

Recently, I was part of a research project to survey and critique human reliability analysis

(HRA) methodologies and consider their role in summative risk and reliability analyses

(Adhikari et al., 2008; French et al., 2010a). Our findings were not comforting. The

empirical evidence is that human behaviour, not necessarily erroneous behaviour, is involved

in something like 75% of all systemic failures. Yet current HRA methodologies lack the

sophistication to model current understandings of human behaviour, particularly in relation to

the correlations and dependencies that it can introduce into systems. Modelling approaches

used in HRA tend to be focussed on easily describable sequential, low-level tasks, i.e. ones in

the known space in which the operators tend to use recognition-primed decision making. But

such tasks are seldom the initiators of systemic failures, which almost invariably involve the

occurrence of and higher-level responses to unexpected, infrequent events in the complex

space, for which operators need problem solving and decision making skills. Moreover, such

high level responses can affect many parts of the system, correlating events. In other words,

the empirical base of HRA is inappropriate to many of the behaviours in systemic failures.
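
The effect of such correlation can be illustrated with a small worked calculation. This is a sketch under assumed numbers, not a model drawn from the HRA literature: two components each fail with probability `p_good` when a shared high-level operator response is sound and `p_bad` when it is poor, and the response is poor with probability `q`. All three values, and the function name, are hypothetical.

```python
def joint_failure_probabilities(q, p_good, p_bad):
    """Compare P(both components fail) with and without the shared response.

    Conditional on the operator response, the two components are assumed
    to fail independently with the same probability.
    """
    # Marginal failure probability of a single component.
    p_single = q * p_bad + (1 - q) * p_good
    # Multiplying marginals ignores the shared response entirely.
    p_both_if_independent = p_single ** 2
    # The joint probability must instead mix over the shared response.
    p_both_actual = q * p_bad ** 2 + (1 - q) * p_good ** 2
    return p_single, p_both_if_independent, p_both_actual


# Hypothetical values: a poor response is rare but very damaging.
p_single, p_indep, p_actual = joint_failure_probabilities(
    q=0.01, p_good=0.001, p_bad=0.5
)
print(f"single component failure:        {p_single:.6f}")
print(f"both fail, assuming independence: {p_indep:.8f}")
print(f"both fail, shared response:       {p_actual:.8f}")
```

With these assumed values the joint failure probability is more than an order of magnitude larger than the independence calculation suggests, which is the sense in which a single high-level response correlates events across the system.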

Our research found that Cynefin was effective in articulating such issues, providing a

framework to discuss the applicability of different HRA methodologies (see also Deloitte,

2009). Further, we also suggested that risk and reliability studies should use Cynefin to

categorise the various contexts of human activity within a system before beginning any HRA.

We also considered high reliability organisation (HRO) theory as part of our studies. Again

Cynefin offered an effective way of articulating a concern. Early HRO theory drew on

examples such as carrier flight deck operations to provide its empirical base and then

extrapolated its thinking to risk and crisis management in contexts such as Bhopal and

Chernobyl (see, e.g., Weick, 1987). Yet this moves from repetitive contexts in Cynefin’s

known space to unique contexts in the complex or chaotic spaces. High reliability in known

contexts is likely to be based upon agreed single perspective science – a single shared mental

model; whereas in complex contexts high reliability organisations need to manage multiple

perspectives and families of shared mental models.

Cynefin, sense-making and problem structuring

At its simplest the decision analysis cycle involves three phases (French et al., 2009):

Formulation or Sense-making Phase, during which the problem, issues, objectives,

uncertainties and options are identified and formulated. This phase is much more visible

in the knowable and complex spaces. In the known space, the problems repeat so often

that they were formulated long ago and sense-making becomes a matter of recognition,

as acknowledged in the term ‘recognition-primed decision making’.

Analysis Phase, during which the issues, objectives, uncertainties and options are

modelled and analysed. This involves predicting the consequences of each possible

option in terms of its success in achieving the decision makers’ objectives, taking

account of the uncertainties in the prediction. Thus the analysis offers guidance towards

options which promise to achieve their objectives. The analysis itself may be formalised

as in the quantitative techniques of finance, decision analysis and OR; or it may be much

more informal and qualitative, perhaps a few diagrams and lists. Moreover, there may be

more than one strand of analysis, each representing a different perspective on the

problem, perhaps those of different stakeholders or, as discussed below in Section 6,

different future scenarios (French, 2003; French et al., 2009).

Appraisal and Decision Phase, during which the decision makers decide which option to

implement or whether more analysis is needed. Since any model is a simplification of

the real world, there will be a need to reflect on the recommendation and see if it makes

sense once the complexity of reality re-enters the discussion. Has the analysis brought

enough understanding to make the decision? Is it requisite (Phillips, 1984; French et al.,

2009)? If so, decide and implement; if not, introduce further issues into the formulation

and reanalyse.
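
The iterative character of the three phases above can be sketched as a loop that stops once the analysis is judged requisite. The function and parameter names are hypothetical, and the three callables stand in for what are in practice facilitated, judgemental activities rather than computations; the toy callables exist only to make the sketch runnable.

```python
def decision_analysis_cycle(formulate, analyse, appraise, max_rounds=10):
    """Iterate formulation -> analysis -> appraisal until the model is requisite.

    formulate(issues) returns a model; analyse(model) returns a recommendation;
    appraise(model, recommendation) returns (is_requisite, further_issues).
    """
    issues = []
    for round_number in range(1, max_rounds + 1):
        model = formulate(issues)
        recommendation = analyse(model)
        is_requisite, further_issues = appraise(model, recommendation)
        if is_requisite:
            return recommendation, round_number
        # Not yet requisite: introduce further issues and reanalyse.
        issues = issues + further_issues
    raise RuntimeError("no requisite model reached within max_rounds")


# Toy callables: the model is requisite once it covers both concerns.
def formulate(issues):
    return {"issues": list(issues)}


def analyse(model):
    return f"option chosen considering {len(model['issues'])} issue(s)"


def appraise(model, recommendation):
    concerns = ("cost", "public confidence")
    missing = [c for c in concerns if c not in model["issues"]]
    # Raise one missing concern per round, as decision makers might in discussion.
    return (not missing), missing[:1]


recommendation, rounds = decision_analysis_cycle(formulate, analyse, appraise)
print(recommendation, "after", rounds, "rounds")
```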

During the formulation or sense-making phase, an analyst seeks to explore, evolve and

challenge the perspectives of the decision makers to build a shared understanding of the

issues and problem(s). The PSMs, referred to above, provide tools to help in this. As

Snowden and his colleagues have emphasised (Kurtz and Snowden, 2003), Cynefin is an

excellent PSM for challenging decision makers to explore the context of the problem. In

decision workshops I have outlined the Cynefin framework to participants, sketched it on a

flipchart and then invited them to discuss where the issues that concern them lie, perhaps

locating them on the chart using post-its. The ensuing discussion has always been

enlightening. Participants seem to find the Cynefin framework intuitive and catalytic. Not

only does it help them set the issues that they face within a broader context, it has proved

useful in helping them understand why their favourite problem solving tools may be

inappropriate in this case: cf. Figure 4. For case studies of the use of Cynefin in problem

structuring, see Deloitte (2009) and www.cognitive-edge.com.

4 Knowledge Management, Inference and Decisions

Snowden introduced Cynefin as a framework to discuss knowledge management. Since the

main focus of this paper relates to statistical inference and decision analysis, it may be helpful

to discuss the relationship between the three topics briefly (for further discussion of decision

making and knowledge management, see Nicolas, 2004). Let me begin by making distinction
