2018 CSE3/4 VIS Assignment: Eigenface Techniques for Face Recognition

Added on 2021/06/15

Practical Assignment

AI Summary

This assignment explores the application of eigenface techniques for face recognition. The solution details a MATLAB implementation that covers reading and resizing the images, determining the parameters K1 and K2, and analyzing the classifier output. Experiments on training and test sets of student images evaluate the system's ability to recognize faces under different moods. The report presents the results in tables and figures and discusses system performance, including neural networks, nearest neighbor techniques, and the effect of varying image sizes.

2018

UNIVERSITY AFFILIATION

2018 CSE3/4 VIS VISUAL INFORMATION SYSTEMS ASSIGNMENT

STUDENT NAME

TABLE OF CONTENTS

BASIC OVERVIEW

Eigenface techniques for face recognition

RESULTS AND OBSERVATIONS: MATLAB IMPLEMENTATION

PROCEDURE

Experiment and results

Part 1: Resizing the images stored in the training dataset and test dataset

Part 2: Determining K1 and K2

Part 3: Training and Test dataset results from classifier output

DISCUSSION

System performance

REFERENCES

BASIC OVERVIEW

Eigenface techniques for face recognition

Modern security systems use facial recognition as a means of authorization. Applications include personal identification, for instance at immigration offices or border points; human-computer interaction, such as unlocking a phone; and access control in offices, large residential complexes, or penthouses (Navarrete & Ruiz-del-Solar, 2002). Every human face has complex, multidimensional, and meaningful attributes that distinguish it from others, and this complexity makes facial recognition difficult to implement in security systems. The recognition process focuses first on local features such as the eyes, nose, and mouth before extracting the features of the whole face (Charalampos & Ilias, 2010).

Several approaches to facial recognition have been defined. For instance, using artificial intelligence, one can build neural networks and self-organizing maps (SOMs), where the user trains the model on some images and uses test images to check the recognition. Alternatives to this approach are content-based image retrieval, principal component analysis (PCA), and relevance feedback (Turk & Pentland). Facial recognition is performed in three stages: face location detection, feature extraction, and facial image classification. Here the recognition is done using the eigenface algorithm (Emad, Tamer, & AbdelMonem). The face images are projected into a feature space that best encodes the variation among the known face images. This face space is spanned by the eigenfaces, which are the eigenvectors of the covariance matrix of the set of faces. The eigenface algorithm computes the average face, v, and then collects the differences between the training images and the average face (Ruiz-del-Solar & Navarrete, 2002). The differences are stored as the columns of an M x N matrix A, where M is the number of pixels and N is the number of training images:

$$A = \left[\, u_1^1 - v,\ \ldots,\ u_n^1 - v,\ \ldots,\ u_1^p - v,\ \ldots,\ u_n^p - v \,\right]$$

The eigenfaces are obtained as the eigenvectors of the covariance matrix C, computed here in MATLAB R2017a, where

$$C = A A^T$$

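When the number of training images N is smaller than the number of pixels M, C has at most N-1 meaningful eigenvectors. Because C is an M x M matrix, its eigenvectors are obtained faster from the much smaller N x N matrix

$$L = A^T A$$

If w is an eigenvector of L with eigenvalue λ, then Aw is an eigenvector of C with the same eigenvalue, since C(Aw) = A(A^T A)w = λ(Aw).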
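The relation between C and L can be sketched in MATLAB as follows. This is a minimal illustration rather than the assignment's own code: the random matrix U stands in for the vectorised training images, and the names U, W, lambda, and Phi are assumptions introduced here.

```matlab
% Sketch: eigenfaces from the small N-by-N matrix L = A'*A.
% Synthetic data replaces the real face images (assumption).
rng(0);                          % reproducible random data
M = 40*30;                       % pixels per image (40x30 as in the appendix)
N = 30;                          % number of training images
U = rand(M, N);                  % each column is one vectorised image
v = mean(U, 2);                  % average face
A = U - repmat(v, 1, N);         % difference images as columns

L = A' * A;                      % N-by-N instead of M-by-M
L = (L + L') / 2;                % enforce exact symmetry before eig
[W, D] = eig(L);                 % columns of W are eigenvectors of L
[lambda, order] = sort(diag(D), 'descend');
W = W(:, order);

K = N - 1;                       % at most N-1 meaningful eigenvectors
Phi = A * W(:, 1:K);             % map eigenvectors of L back to image space
Phi = Phi ./ repmat(sqrt(sum(Phi.^2, 1)), M, 1);   % unit-length eigenfaces
```

Each column of Phi is then an eigenvector of C = A*A' with the corresponding eigenvalue in lambda, obtained without ever forming the 1200-by-1200 matrix C.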

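Both the training face images and new face images are represented as linear combinations of the eigenfaces. For instance, a face image u can be represented as

$$u = \sum_i a_i \phi_i$$

The eigenvectors \phi_i are orthonormal, so each coefficient is the projection of the image onto the corresponding eigenface:

$$a_i = u^T \phi_i$$

In the eigenface method the average face v is subtracted from the image before this projection.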
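A short MATLAB sketch of the expansion and its coefficients is given below. It assumes an orthonormal set of eigenfaces; the matrix Phi is generated from a QR factorisation of random data purely for illustration, and a_true is a made-up coefficient vector.

```matlab
% Sketch: coefficients a_i = u' * phi_i for orthonormal eigenfaces.
rng(1);
M = 1200;                         % pixels per image
K = 5;                            % number of eigenfaces kept (illustrative)
[Phi, ~] = qr(randn(M, K), 0);    % stand-in orthonormal eigenfaces (assumption)

a_true = [3; -1; 0.5; 2; -4];     % made-up coefficients
u = Phi * a_true;                 % u = sum_i a_i * phi_i

a = Phi' * u;                     % recover each a_i by projection
u_rec = Phi * a;                  % rebuild the image from its coefficients
```

Because the columns of Phi are orthonormal, the projection recovers a_true and u_rec equals u up to rounding error.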

PCA seeks the directions that represent the data most efficiently, that is, the directions that maximize the total scatter. It reduces the dimension of the data and so shortens the computation time. Recognition time matters in a real deployment, where the system should detect a face as quickly as possible, ideally close to real time (Tolba, El-Baz, & El-Harby, 2006).

RESULTS AND OBSERVATIONS: MATLAB IMPLEMENTATION

The task uses the training and test data to identify face images among the stored images: for each test image, the algorithm retrieves the most similar images from the database. The database holds 30 training images, and 20 images are used for testing.

PROCEDURE:

(i) The training set consists of 30 images and the test set of 20 images, each stored in its own folder. The images are loaded and the eigenfaces are calculated using the PCA projections. These projections define the eigenspace.

(ii) A new face from the test data is projected onto the eigenfaces and its weights are computed. At this point the system determines whether the image is a face. If it is, the weight pattern is classified as known or unknown (Hongliang, Qingshan, Xiaoou, & Hanqing, 2005).

(iii) In practice, the classification would be used to grant or deny authorization or access to a building. The image must match an existing image in the database to be recognized successfully (Belhumeur, Hespanha, & Kriegman).

(iv) The learning process picks up unknown faces and incorporates them in the database. For instance, when a new staff member is registered in a company, the system learns the unknown face and adds it to its database together with a description used to grant or deny access to that face (Chellappa, Wilson, & Sirohey).
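The face / known / unknown decision in the procedure can be sketched as below. This is one possible reading, not the assignment's code: it uses the distance from the face space to decide whether the image is a face and the distance to the nearest stored weight vector to decide known versus unknown. The thresholds thetaFace and thetaClass, the matrix classMeans, and all data are hypothetical.

```matlab
% Sketch: decide "not a face", "unknown face" or "known face".
rng(2);
M = 1200; K = 5; P = 3;               % pixels, eigenfaces, known persons
[Phi, ~] = qr(randn(M, K), 0);        % stand-in orthonormal eigenfaces
v = rand(M, 1);                       % stand-in average face
classMeans = [10 0 0; 0 10 0; 0 0 10; 0 0 0; 0 0 0];  % K-by-P stored weights
thetaFace  = 1.0;                     % hypothetical face-space threshold
thetaClass = 2.0;                     % hypothetical known/unknown threshold

% Probe image: person 2 with a small change in its weights
u = v + Phi * (classMeans(:, 2) + 0.1 * ones(K, 1));

d = u - v;                            % subtract the average face
a = Phi' * d;                         % weights of the probe
epsFace = norm(d - Phi * a);          % distance from the face space
dists = sqrt(sum((classMeans - repmat(a, 1, P)).^2, 1));
[dMin, who] = min(dists);             % closest known person

if epsFace > thetaFace
    label = 'not a face';
elseif dMin > thetaClass
    label = 'unknown face';
else
    label = sprintf('known face: person %d', who);
end
disp(label)
```

In a real system both thresholds would have to be tuned on the training data; the values here only make the example run.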

Experiment and results.

The data comes from the lab experiments with student images. The training set contains 10 students with 3 moods each, giving 10 x 3 = 30 images, while the test set contains 10 test subjects with 2 moods each, giving 10 x 2 = 20 images. The designer creates a face database; the system then generates the eigenface database and selects the top eigenfaces (Teofilo, Rogerio, & Roberto, 2000). A test image is used to show whether the system can recognize the face from the database already set up.

(i) The first attempt computes the average coefficient vector for each person and assigns a new face to the person whose average is closest. The recognition accuracy increases with more learning procedures.

(ii) The eigenfaces are useful during training to set the average value of recognition but do not help much afterwards.
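The closest-average rule in (i) might look like the following MATLAB sketch. The coefficient matrix Wtrain, the label vector, and the probe are synthetic stand-ins, so the numbers say nothing about the real student images.

```matlab
% Sketch: classify a new face by the closest per-person average coefficient.
rng(3);
P = 10; moods = 3; K = 10;            % persons, images per person, coefficients
centres = 20 * eye(P);                % made-up, well separated persons
Wtrain = kron(centres, ones(1, moods)) + randn(K, P * moods);  % K-by-30
labels = kron(1:P, ones(1, moods));   % [1 1 1 2 2 2 ... 10 10 10]

% Average coefficient vector for each person
classMeans = zeros(K, P);
for p = 1:P
    classMeans(:, p) = mean(Wtrain(:, labels == p), 2);
end

% New face: person 7 in a different mood (simulated by noise)
probe = centres(:, 7) + randn(K, 1);
sqDist = sum((classMeans - repmat(probe, 1, P)).^2, 1);
[~, predicted] = min(sqDist);         % index of the closest average
fprintf('Predicted person: %d\n', predicted);
```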

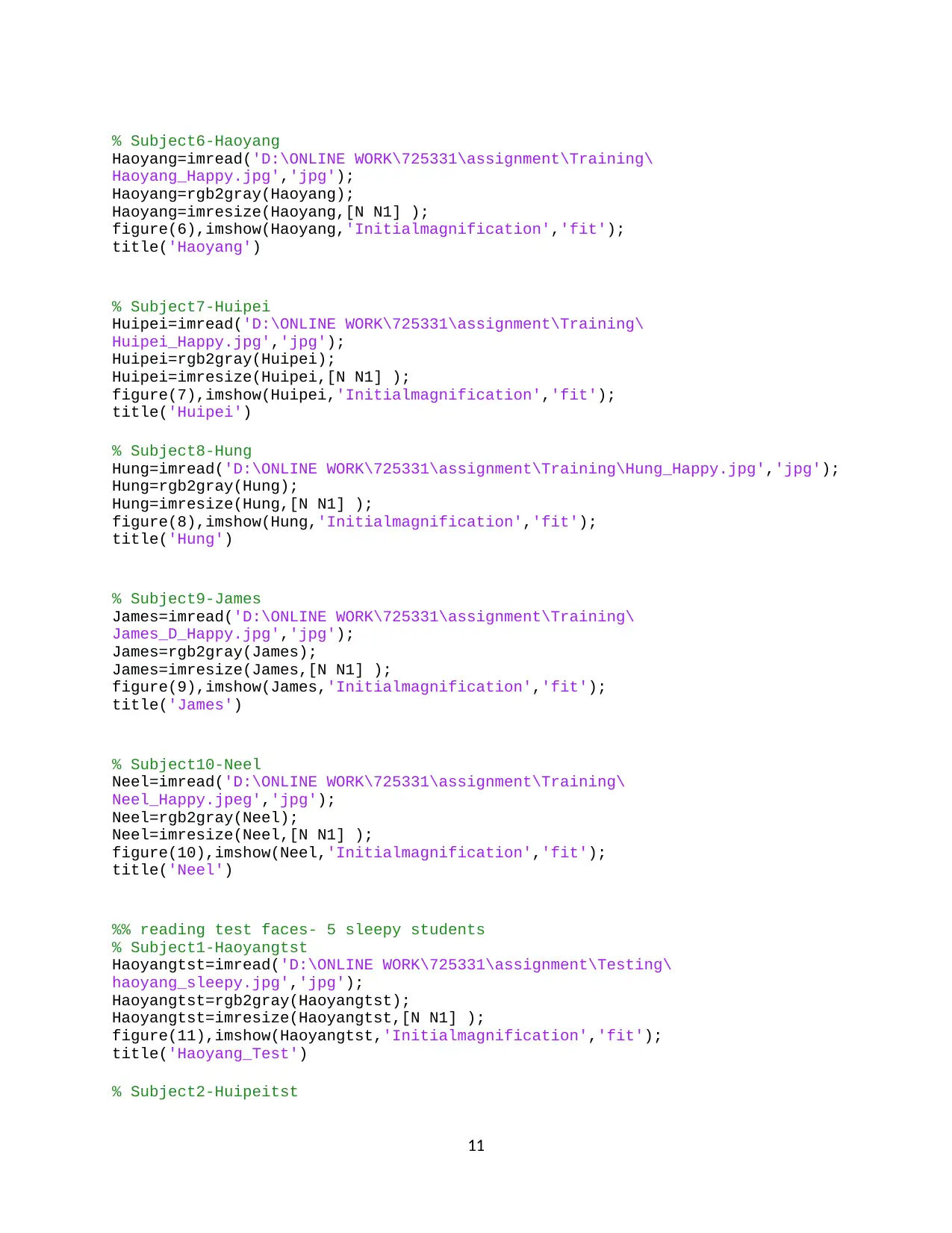

Part 1: Resizing the images stored in the training dataset and test dataset

%% Part 1: Resizing and Reading Images
% N and N1 (image height and width after resizing) are set in the appendix listing.
% Subject1-Dhara
dhara=imread('D:\ONLINE WORK\725331\assignment\Training\Dhara_Happy.jpg','jpg');
dhara=rgb2gray(dhara);
dhara=imresize(dhara,[N N1] );
figure(1),imshow(dhara,'InitialMagnification','fit');
title('dhara')
% Subject2-Diksha
diksha=imread('D:\ONLINE WORK\725331\assignment\Training\Diksha_Happy.jpg','jpg');
diksha=rgb2gray(diksha);
diksha=imresize(diksha,[N N1] );
figure(2),imshow(diksha,'InitialMagnification','fit');

title('diksha')
% Subject3-Eric
Eric=imread('D:\ONLINE WORK\725331\assignment\Training\Eric_Happy.jpg','jpg');
Eric=rgb2gray(Eric);
Eric=imresize(Eric,[N N1] );
figure(3),imshow(Eric,'InitialMagnification','fit');
title('Eric')
% Subject4-Gauta
Gauta=imread('D:\ONLINE WORK\725331\assignment\Training\Gautam_Happy.jpg','jpg');
Gauta=rgb2gray(Gauta);
Gauta=imresize(Gauta,[N N1] );
figure(4),imshow(Gauta,'InitialMagnification','fit');
title('Gauta')
% Subject5-Ghaida
Ghaida=imread('D:\ONLINE WORK\725331\assignment\Training\Ghaida_Happy.jpg','jpg');
Ghaida=rgb2gray(Ghaida);
Ghaida=imresize(Ghaida,[N N1] );
figure(5),imshow(Ghaida,'InitialMagnification','fit');
title('Ghaida')
% Subject6-Haoyang
Haoyang=imread('D:\ONLINE WORK\725331\assignment\Training\Haoyang_Happy.jpg','jpg');
Haoyang=rgb2gray(Haoyang);
Haoyang=imresize(Haoyang,[N N1] );
figure(6),imshow(Haoyang,'InitialMagnification','fit');
title('Haoyang')
% Subject7-Huipei
Huipei=imread('D:\ONLINE WORK\725331\assignment\Training\Huipei_Happy.jpg','jpg');
Huipei=rgb2gray(Huipei);
Huipei=imresize(Huipei,[N N1] );
figure(7),imshow(Huipei,'InitialMagnification','fit');
title('Huipei')
% Subject8-Hung
Hung=imread('D:\ONLINE WORK\725331\assignment\Training\Hung_Happy.jpg','jpg');
Hung=rgb2gray(Hung);
Hung=imresize(Hung,[N N1] );
figure(8),imshow(Hung,'InitialMagnification','fit');
title('Hung')

% Subject9-James
James=imread('D:\ONLINE WORK\725331\assignment\Training\James_D_Happy.jpg','jpg');
James=rgb2gray(James);
James=imresize(James,[N N1] );
figure(9),imshow(James,'InitialMagnification','fit');
title('James')
% Subject10-Neel
Neel=imread('D:\ONLINE WORK\725331\assignment\Training\Neel_Happy.jpeg','jpg');
Neel=rgb2gray(Neel);
Neel=imresize(Neel,[N N1] );
figure(10),imshow(Neel,'InitialMagnification','fit');
title('Neel')

[Figure: Happy Students - Z Average]
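An average face of the kind shown in the figure could be computed roughly as follows. This sketch assumes the ten resized grayscale images are held in a cell array called faces (a name introduced here); random images are used so that the example runs without the image files.

```matlab
% Sketch: average face from M resized grayscale images.
rng(5);
N = 40; N1 = 30; M = 10;              % image height, width, number of students
faces = cell(1, M);
for s = 1:M
    % Stand-in for a resized grayscale image such as dhara, diksha, ...
    faces{s} = uint8(randi([0 255], N, N1));
end

% Stack every image as one column of Z
Z = zeros(N * N1, M);
for s = 1:M
    Z(:, s) = double(faces{s}(:));
end

avgVec  = mean(Z, 2);                 % average face as a column vector
avgFace = reshape(avgVec, N, N1);     % back to image shape for display
% figure, imshow(uint8(avgFace), 'InitialMagnification', 'fit'); title('Average')
```

The display line is left commented out because imshow needs the Image Processing Toolbox; the averaging itself uses only core MATLAB functions.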

Part 2: Determining K1 and K2

Table 1 Results for the test dataset (K1)

              1-NN       3-NN       5-NN
40x30 size    39.0415    36.0745    45.6723
80x60 size    78.0830    72.1490    89.3447
Average       58.5622    54.1118    67.5085

Table 2 Results for the test dataset (K2)

              1-NN       3-NN       5-NN
40x30 size    43.3975    40.4305    50.0283
80x60 size    82.4390    76.5050    93.7007
Average       62.9182    58.4678    71.8645
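The 1-NN, 3-NN and 5-NN columns refer to nearest neighbor classifiers with different numbers of neighbors. A minimal sketch of such a classifier is shown below, assuming Euclidean distance and majority voting; the training coefficients and the probe are synthetic, so the output does not reproduce the figures in the tables.

```matlab
% Sketch: k-nearest-neighbor classification for k = 1, 3, 5.
rng(4);
P = 10; moods = 3; K = 10;            % persons, images per person, coefficients
centres = 20 * eye(P);                % made-up, well separated persons
Wtrain = kron(centres, ones(1, moods)) + randn(K, P * moods);
labels = kron(1:P, ones(1, moods));   % person label of each training column

probe = centres(:, 4) + randn(K, 1);  % test face of person 4 (simulated)

% Euclidean distance from the probe to every training image
d = sqrt(sum((Wtrain - repmat(probe, 1, P * moods)).^2, 1));
[~, idx] = sort(d, 'ascend');

ks = [1 3 5];
preds = zeros(size(ks));
for j = 1:numel(ks)
    votes = labels(idx(1:ks(j)));     % labels of the k closest images
    preds(j) = mode(votes);           % majority vote
    fprintf('%d-NN predicts person %d\n', ks(j), preds(j));
end
```

With only three training images per person, a 5-NN vote always includes at least two images of other people, which is one reason the choice of k matters on a data set this small.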

Part 3: Training and Test dataset results from classifier output

[Figure: eigenfaces]

DISCUSSION

System performance

A high-performing system should reach recognition rates of about

training set → 95 %

test set → 85 %

The neural network model trains well on the data, but training takes time, especially if the data set is large. When the classification is performed using the nearest neighbor technique, classification takes more time. When the test case is the image of Dhara looking surprised, the algorithm links it to the original image of Dhara taken during training.

[Figure: test face Dhara]

REFERENCES

Belhumeur, P., Hespanha, J., & Kriegman, D. (n.d.). Eigenfaces vs Fisherfaces: Recognition using Class Specific Linear Projection.

Charalampos, D., & Ilias, M. (2010). A Fast Mobile Face Recognition System for Android OS Based on Eigenfaces Decomposition. IFIP Advances in Information and Communication Technology, 295-302.

Chellappa, R., Wilson, C. L., & Sirohey, S. (n.d.). Human and Machine Recognition of Faces: A Survey. Proceedings of the IEEE, 705-740.

Emad, B., Tamer, M., & AbdelMonem, W. A. (n.d.). A new image comparing technique for content-based image retrieval.

Hongliang, J., Qingshan, L., Xiaoou, T., & Hanqing, L. (2005). Learning Local Descriptors for Face Detection. IEEE International Conference on Multimedia and Expo, ICME 2005, 928-931.

Navarrete, P., & Ruiz-del-Solar, J. (2002). Interactive Face Retrieval using Self-Organizing Maps. International Joint Conference on Neural Networks, IJCNN 2002, 12-17.

Ruiz-del-Solar, J., & Navarrete, P. (2002). Towards a Generalized Eigenspace-based Face Recognition Framework. 4th Int. Workshop on Statistical Techniques in Pattern Recognition, 6-9.

Teofilo, E. d., Rogerio, S. F., & Roberto, C. M. (2000). Eigenfaces versus Eigeneyes: First steps toward performance assessment of representations for face recognition. Lecture Notes in Artificial Intelligence, 197-206.

Tolba, A. S., El-Baz, A. H., & El-Harby, A. A. (2006). Face Recognition: A Literature Review. International Journal of Signal Processing, 2-5.

Turk, M., & Pentland, A. (n.d.). Eigenfaces for Recognition. Journal of Cognitive Neuroscience, 71-86.

APPENDIX

%% Student Registration Details
% Group Members and their respective IDs
close all
clc
N=40;  % image height in pixels after resizing
N1=30; % image width in pixels after resizing
M=10;  % number of student faces
%% Part 1: Resizing and Reading Images
% Subject1-Dhara
dhara=imread('D:\ONLINE WORK\725331\assignment\Training\Dhara_Happy.jpg','jpg');
dhara=rgb2gray(dhara);
dhara=imresize(dhara,[N N1] );
figure(1),imshow(dhara,'InitialMagnification','fit');
title('dhara')
% Subject2-Diksha
diksha=imread('D:\ONLINE WORK\725331\assignment\Training\Diksha_Happy.jpg','jpg');
diksha=rgb2gray(diksha);
diksha=imresize(diksha,[N N1] );
figure(2),imshow(diksha,'InitialMagnification','fit');
title('diksha')
% Subject3-Eric
Eric=imread('D:\ONLINE WORK\725331\assignment\Training\Eric_Happy.jpg','jpg');
Eric=rgb2gray(Eric);
Eric=imresize(Eric,[N N1] );
figure(3),imshow(Eric,'InitialMagnification','fit');
title('Eric')
% Subject4-Gauta
Gauta=imread('D:\ONLINE WORK\725331\assignment\Training\Gautam_Happy.jpg','jpg');
Gauta=rgb2gray(Gauta);
Gauta=imresize(Gauta,[N N1] );
figure(4),imshow(Gauta,'InitialMagnification','fit');
title('Gauta')
% Subject5-Ghaida
Ghaida=imread('D:\ONLINE WORK\725331\assignment\Training\Ghaida_Happy.jpg','jpg');
Ghaida=rgb2gray(Ghaida);
Ghaida=imresize(Ghaida,[N N1] );
figure(5),imshow(Ghaida,'InitialMagnification','fit');
title('Ghaida')

% Subject6-Haoyang
Haoyang=imread('D:\ONLINE WORK\725331\assignment\Training\Haoyang_Happy.jpg','jpg');
Haoyang=rgb2gray(Haoyang);
Haoyang=imresize(Haoyang,[N N1] );
figure(6),imshow(Haoyang,'InitialMagnification','fit');
title('Haoyang')
% Subject7-Huipei
Huipei=imread('D:\ONLINE WORK\725331\assignment\Training\Huipei_Happy.jpg','jpg');
Huipei=rgb2gray(Huipei);
Huipei=imresize(Huipei,[N N1] );
figure(7),imshow(Huipei,'InitialMagnification','fit');
title('Huipei')
% Subject8-Hung
Hung=imread('D:\ONLINE WORK\725331\assignment\Training\Hung_Happy.jpg','jpg');
Hung=rgb2gray(Hung);
Hung=imresize(Hung,[N N1] );
figure(8),imshow(Hung,'InitialMagnification','fit');
title('Hung')
% Subject9-James
James=imread('D:\ONLINE WORK\725331\assignment\Training\James_D_Happy.jpg','jpg');
James=rgb2gray(James);
James=imresize(James,[N N1] );
figure(9),imshow(James,'InitialMagnification','fit');
title('James')
% Subject10-Neel
Neel=imread('D:\ONLINE WORK\725331\assignment\Training\Neel_Happy.jpeg','jpg');
Neel=rgb2gray(Neel);
Neel=imresize(Neel,[N N1] );
figure(10),imshow(Neel,'InitialMagnification','fit');
title('Neel')
%% reading test faces- 5 sleepy students
% Subject1-Haoyangtst
Haoyangtst=imread('D:\ONLINE WORK\725331\assignment\Testing\haoyang_sleepy.jpg','jpg');
Haoyangtst=rgb2gray(Haoyangtst);
Haoyangtst=imresize(Haoyangtst,[N N1] );
figure(11),imshow(Haoyangtst,'InitialMagnification','fit');
title('Haoyang_Test')
% Subject2-Huipeitst
